Web App

Recipe chat with context

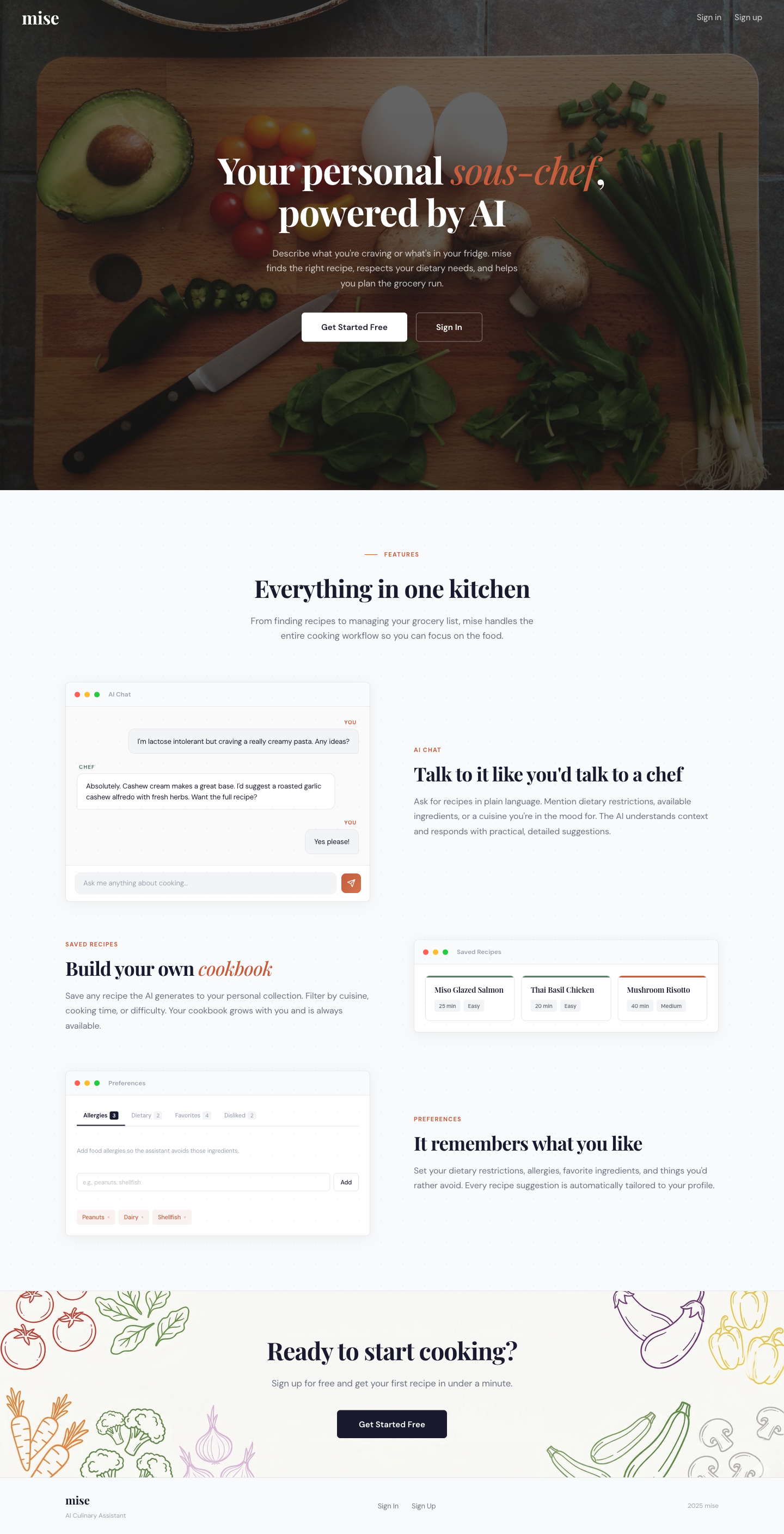

mise

A recipe chat backed by Llama 3.3 70B. Each request is wrapped with the user’s saved dietary profile and current fridge contents so suggestions respect allergies, avoid duplicates, and favor ingredients that are about to expire.

Landing page

Motivation

A chat with an LLM has no memory of what you’re allergic to or what’s in your fridge

Each call to Together.ai is stateless. Any personalization has to be re-assembled on the server for every chat turn. mise stores a per-user dietary profile and a fridge inventory in MongoDB, and the /api/chat handler reads both before calling the model.

The system prompt labels allergies as a hard constraint (ingredients the model must never suggest), dietary restrictions as rules to respect, and favorites/dislikes as soft preferences. Fridge items within three days of their expiry date are tagged so the model prioritizes them.

Demo

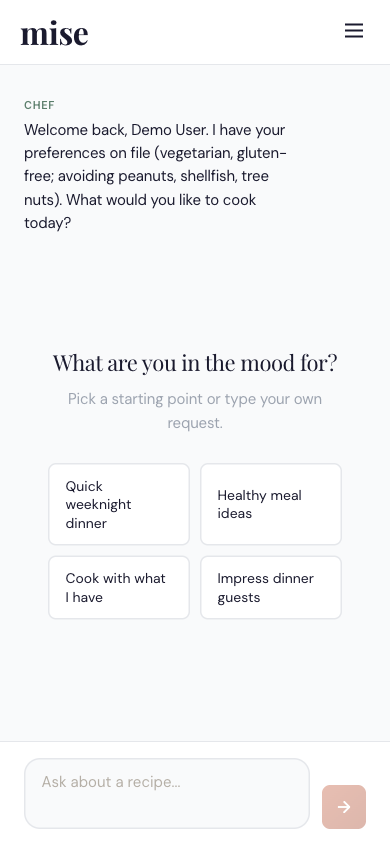

Mobile

Surfaces

01

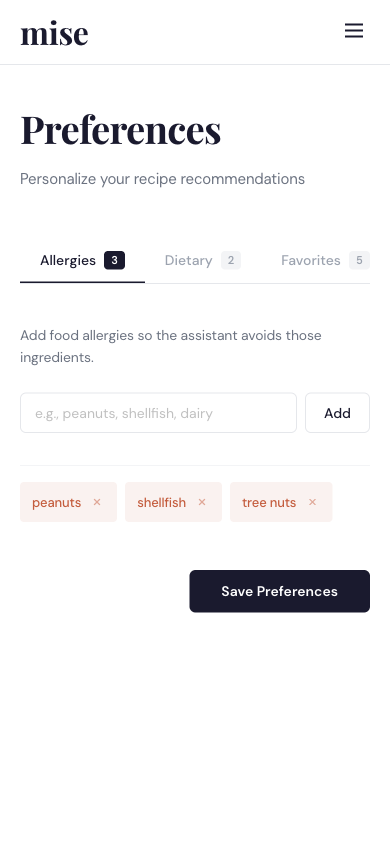

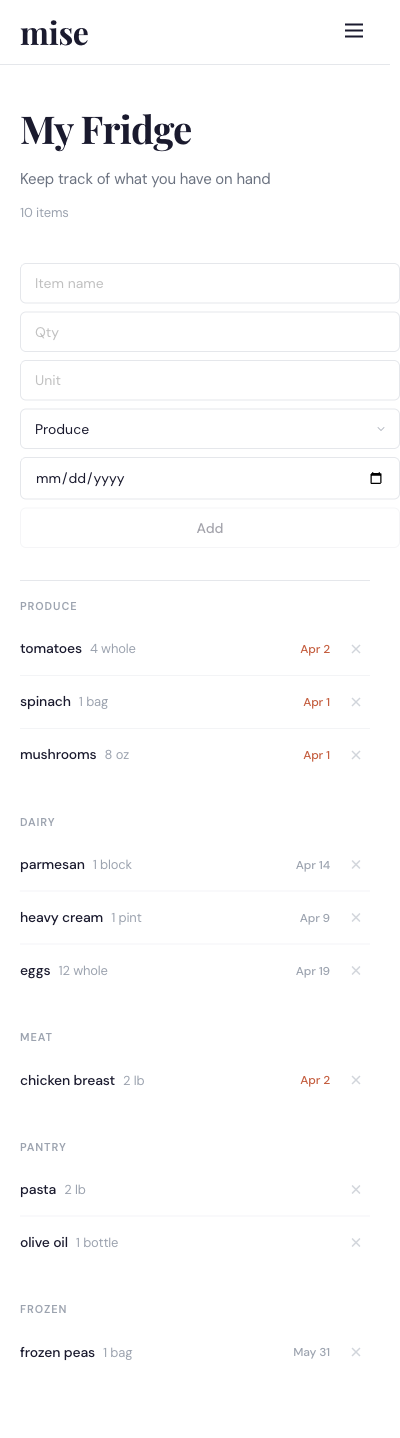

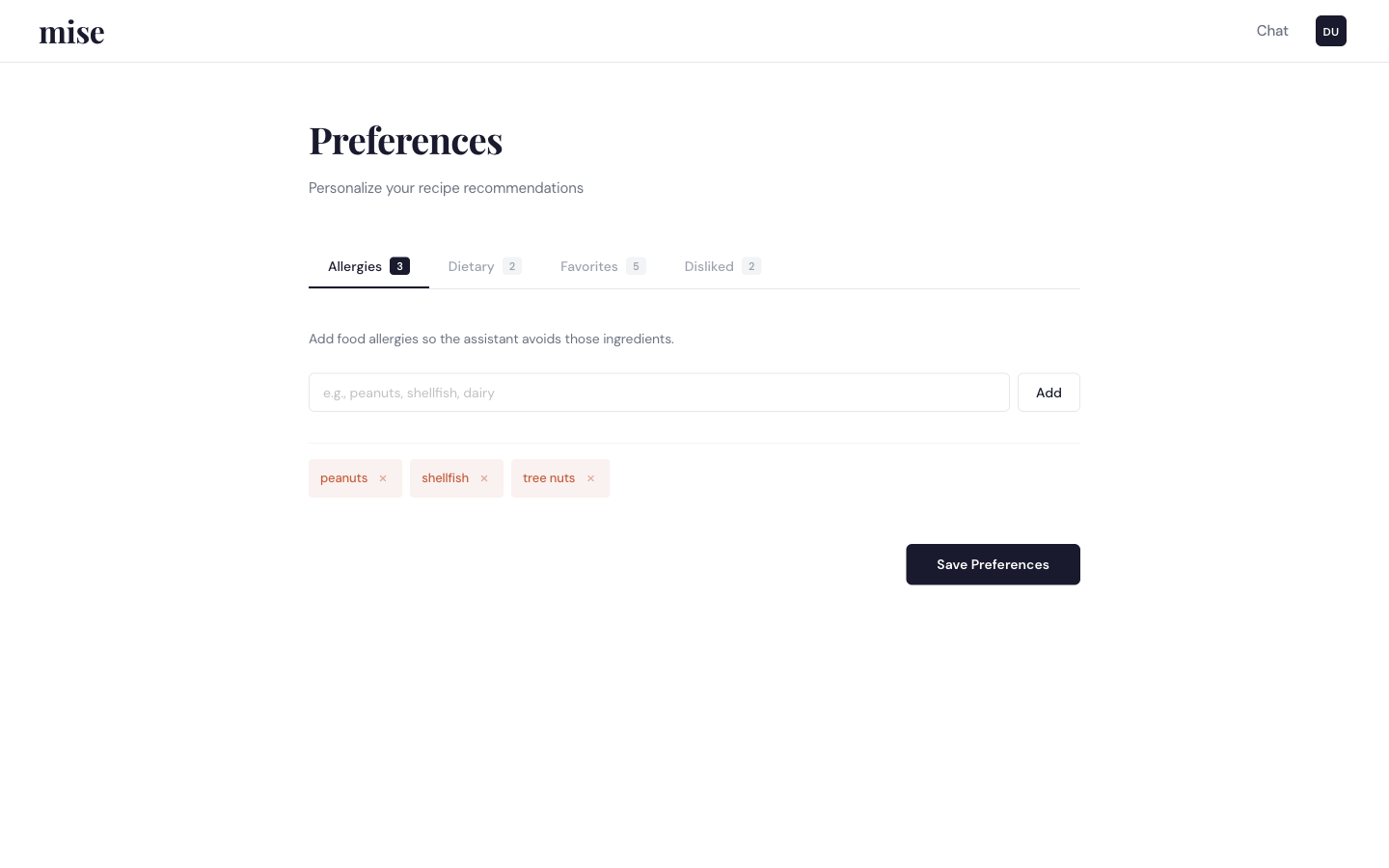

Dietary profile

Four tabbed lists on the user document: allergies, dietary restrictions, favorite ingredients, disliked ingredients. Each list is a plain [String] field on the User schema and is read on every chat request.

02

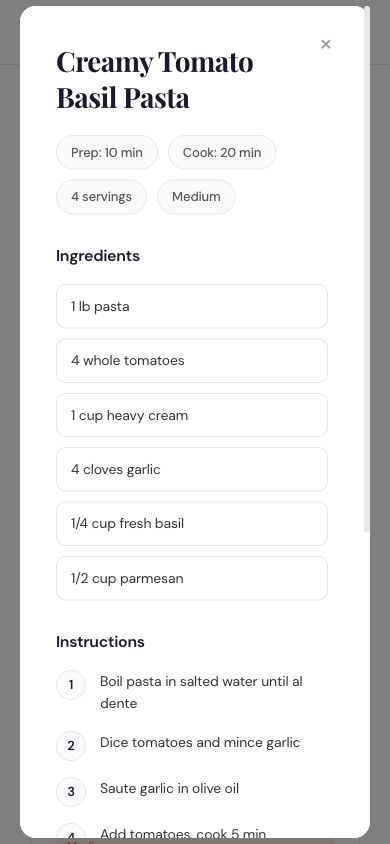

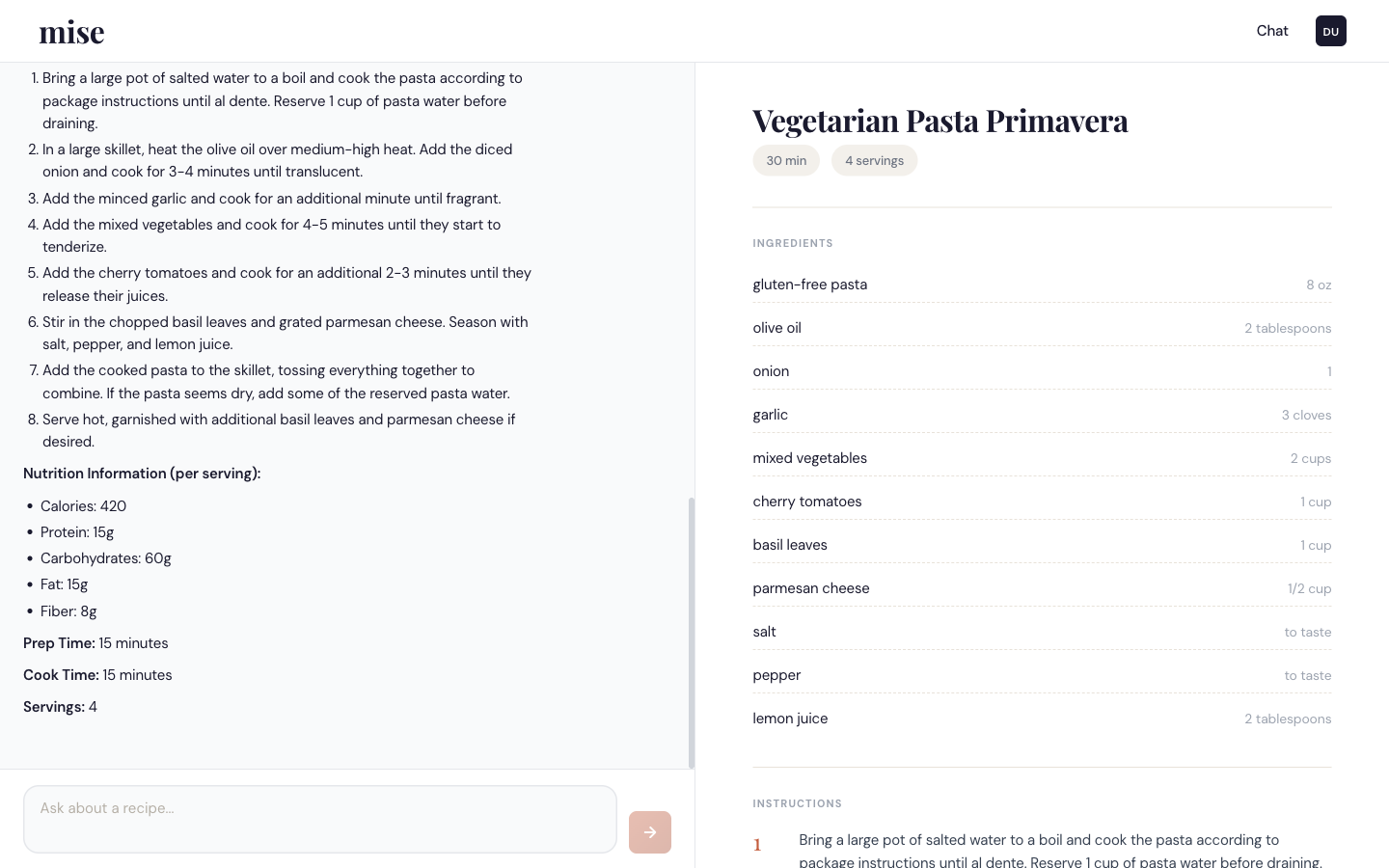

Chat with a recipe side-panel

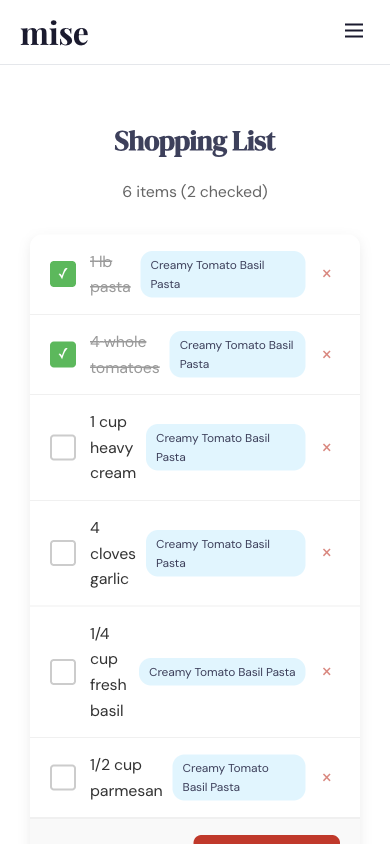

The model returns plain text followed by a STRUCTURED_DATA JSON block. The client strips the block before rendering the message and uses the parsed object to populate option pills, a recipe card in the right panel on desktop, or a bottom sheet on mobile.

03

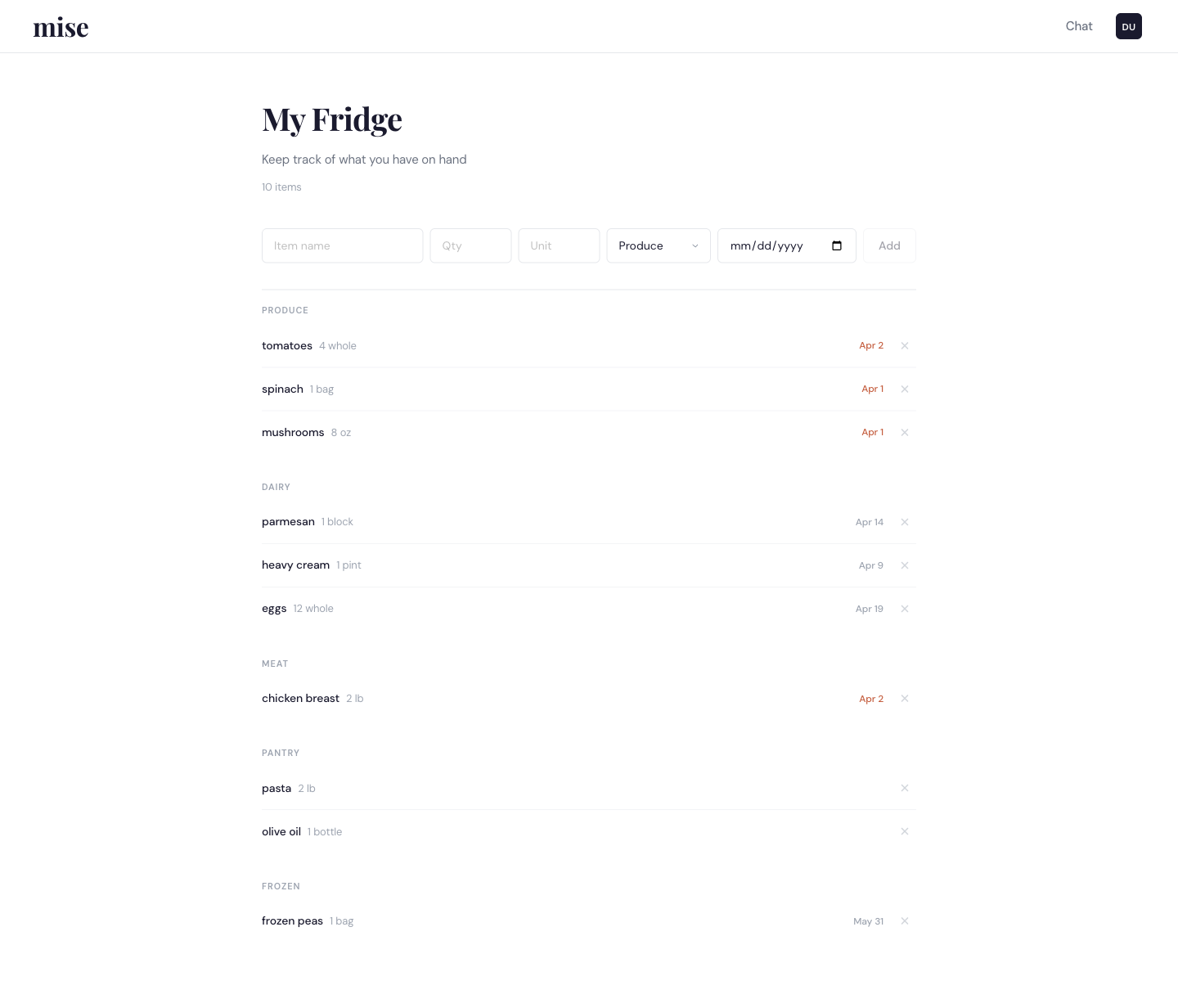

Fridge and shopping list

Fridge items carry a name, quantity, category, and optional expiry date. Anything within three days of expiring is flagged in the UI and in the system prompt. When a recipe’s ingredients are pushed to the shopping list, each item is compared against the fridge and marked inFridge if the name matches.

Technical Deep Dive

Architecture

System prompt assembly

The chat route loads the user’s preferences and fridge subdocuments with a single .select() projection, then hands both to processCulinaryChat in llamaService.js.

The service builds two text blocks and appends them to a fixed system message. Allergies are labeled CRITICAL with an explicit instruction to never suggest them, dietary restrictions are stated as must-respect, and favorites/dislikes are soft signals. Fridge items are grouped by category, and any item whose expiryDate is within three days is tagged [EXPIRING SOON].

llamaService.js — preference block

    const parts = [];

    if (userPreferences.allergies?.length) {
      parts.push(
        `ALLERGIES (CRITICAL — NEVER suggest): ${
          userPreferences.allergies.join(', ')
        }`
      );
    }

    if (userPreferences.dietaryRestrictions?.length) {
      parts.push(
        `DIETARY RESTRICTIONS: ${
          userPreferences.dietaryRestrictions.join(', ')
        }`
      );
    }

    // favorites / dislikes appended
    // as soft-preference lines
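
The fridge half of the prompt is described but not shown. A minimal sketch of how such a block might be assembled, grouping items by category and tagging anything within three days of expiry. `buildFridgeBlock`, the injectable `now` argument, the `'other'` default category, and the exact line format are assumptions for illustration, not the code in llamaService.js; treating already-expired items as expiring soon is also an assumption.

```javascript
const THREE_DAYS_MS = 3 * 24 * 60 * 60 * 1000;

// Hypothetical helper: returns the fridge text block for the system prompt.
// `now` is injectable so the expiry check is deterministic.
function buildFridgeBlock(fridge, now = new Date()) {
  const byCategory = new Map();

  for (const item of fridge) {
    const category = item.category || 'other'; // assumed default
    let label = `${item.name} (${item.quantity})`;

    if (item.expiryDate) {
      const msLeft = new Date(item.expiryDate).getTime() - now.getTime();
      // Assumption: items already past their date are tagged as well.
      if (msLeft <= THREE_DAYS_MS) label += ' [EXPIRING SOON]';
    }

    if (!byCategory.has(category)) byCategory.set(category, []);
    byCategory.get(category).push(label);
  }

  // One line per category, in the order categories were first seen.
  return [...byCategory]
    .map(([category, labels]) => `${category}: ${labels.join(', ')}`)
    .join('\n');
}

const block = buildFridgeBlock(
  [
    { name: 'spinach', quantity: '1 bag', category: 'vegetables', expiryDate: '2025-01-03' },
    { name: 'carrots', quantity: '6', category: 'vegetables', expiryDate: '2025-01-20' },
    { name: 'milk', quantity: '1 L', category: 'dairy' },
  ],
  new Date('2025-01-01T00:00:00Z')
);
console.log(block);
// vegetables: spinach (1 bag) [EXPIRING SOON], carrots (6)
// dairy: milk (1 L)
```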

llamaService.js — 429 handling

    } catch (error) {
      if (error.response?.status === 429) {
        retries++;
        if (retries > maxRetries) break;
        const retryAfter =
          error.response.headers['retry-after']
            ? parseInt(
                error.response.headers['retry-after']
              ) * 1000
            : Math.pow(2, retries) * 1000;
        await new Promise(r =>
          setTimeout(r, retryAfter)
        );
      } else {
        break; // falls through to fallback
      }
    }
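
One edge case in the delay calculation: the HTTP specification allows Retry-After to carry either a number of seconds or an HTTP date, and `parseInt` on a date string leaves the delay as NaN. Whether Together.ai ever sends the date form is not stated here, so the following is a defensive sketch only. `retryDelayMs` is a hypothetical helper, not part of llamaService.js.

```javascript
// Hypothetical helper, not taken from llamaService.js.
// Returns how long to wait (ms) before retry number `attempt` (1-based).
function retryDelayMs(retryAfterHeader, attempt, now = Date.now()) {
  const backoff = Math.pow(2, attempt) * 1000; // 2s, 4s, 8s for attempts 1..3

  if (!retryAfterHeader) return backoff;

  // Form 1: delay in whole seconds, e.g. "7".
  if (/^\d+$/.test(retryAfterHeader.trim())) {
    return parseInt(retryAfterHeader, 10) * 1000;
  }

  // Form 2: HTTP date, e.g. "Wed, 01 Jan 2025 00:00:10 GMT".
  const at = Date.parse(retryAfterHeader);
  if (!Number.isNaN(at)) return Math.max(0, at - now);

  // Unparseable header: fall back to exponential backoff.
  return backoff;
}

const now = Date.parse('Wed, 01 Jan 2025 00:00:00 GMT');
console.log(retryDelayMs(undefined, 1, now));                       // 2000
console.log(retryDelayMs('7', 2, now));                             // 7000
console.log(retryDelayMs('Wed, 01 Jan 2025 00:00:10 GMT', 1, now)); // 10000
console.log(retryDelayMs('soon', 3, now));                          // 8000
```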

Together.ai wrapper

Together.ai’s free tier caps requests at 5 per minute and occasionally returns 429. processChat sits behind four small pieces that together make the endpoint usable from a live UI:

- LRU cache: keyed on a JSON hash of the message list and parameters. Max 100 entries, 30-minute TTL. Identical prompts skip the API.

- FIFO queue: enforces a 60000 / RPM minimum interval between outbound calls so bursts don’t trigger 429 in the first place.

- Retry on 429 only: up to 3 retries with 2s / 4s / 8s backoff, or whatever the Retry-After header specifies. Other errors break out immediately.

- Fallback: a rule-based handler that keyword-matches recipe / cook / make in the last user message and returns a structured options payload the chat UI can still render.
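
The cache layer can be approximated in a few lines on top of `Map`, which iterates in insertion order. This is a sketch under assumptions, not the project's code: `TtlLruCache` and `cacheKey` are hypothetical names, and SHA-256 over `JSON.stringify` stands in for whatever "JSON hash" the real wrapper uses.

```javascript
const crypto = require('crypto');

// Hypothetical key function: hash of the serialized request.
function cacheKey(messages, params) {
  return crypto
    .createHash('sha256')
    .update(JSON.stringify({ messages, params }))
    .digest('hex');
}

// Minimal LRU + TTL cache. Defaults mirror the limits quoted above.
class TtlLruCache {
  constructor({ max = 100, ttlMs = 30 * 60 * 1000, now = Date.now } = {}) {
    this.max = max;
    this.ttlMs = ttlMs;
    this.now = now; // injectable clock, for tests
    this.map = new Map();
  }

  get(key) {
    const entry = this.map.get(key);
    if (!entry) return undefined;
    if (this.now() - entry.storedAt > this.ttlMs) {
      this.map.delete(key); // expired
      return undefined;
    }
    // Re-insert so this key becomes the most recently used.
    this.map.delete(key);
    this.map.set(key, entry);
    return entry.value;
  }

  set(key, value) {
    this.map.delete(key);
    this.map.set(key, { value, storedAt: this.now() });
    if (this.map.size > this.max) {
      // The first key in iteration order is the least recently used.
      this.map.delete(this.map.keys().next().value);
    }
  }
}

let clock = 0;
const cache = new TtlLruCache({ max: 2, ttlMs: 1000, now: () => clock });
const key = cacheKey([{ role: 'user', content: 'dinner ideas?' }], { temperature: 0.7 });

cache.set(key, 'cached reply');
console.log(cache.get(key)); // 'cached reply'
clock = 1001;
console.log(cache.get(key)); // undefined (past the TTL)
```

Note that `JSON.stringify` is sensitive to property order, so two requests that differ only in key order would miss each other in this sketch.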

Shopping list cross-reference

When the client posts a recipe to POST /shopping-list/recipe/:recipeId, the controller loads the user’s fridge and compares each recipe ingredient by lowercased name. Matches are persisted on the shopping list item as inFridge: true, which the UI uses to show a strike-through pill.

The match is plain string equality on the lowercased names, not a fuzzy comparison. “chicken breast” in the fridge does not match “chicken” in the recipe; this is a known limitation.

shoppingListController.js — addFromRecipe

    const user = await User.findById(req.userId)
      .select('fridge').lean();

    const fridgeNames = (user?.fridge || [])
      .map(item => item.name.toLowerCase());

    const newItems = recipe.ingredients.map(ing => ({
      ingredient: ing.ingredient,
      amount: ing.amount,
      unit: ing.unit || '',
      checked: false,
      inFridge: fridgeNames.some(
        n => n === ing.ingredient.toLowerCase()
      ),
      fromRecipe: recipe.name,
    }));
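
The exact-match limitation could be relaxed with a word-level comparison. The following is a sketch of one option, not how mise behaves: `matchesFridge` and `words` are hypothetical helpers that treat a recipe ingredient as present when every word of it appears in a single fridge item's name.

```javascript
// Hypothetical helper: split a name into lowercase words.
const words = name => name.toLowerCase().split(/\s+/).filter(Boolean);

// True when every word of the recipe ingredient appears in some fridge item.
function matchesFridge(ingredient, fridgeNames) {
  const wanted = words(ingredient);
  if (wanted.length === 0) return false; // empty names never match
  return fridgeNames.some(fridgeName => {
    const have = new Set(words(fridgeName));
    return wanted.every(w => have.has(w));
  });
}

const fridge = ['Chicken breast', 'peanut butter'];
console.log(matchesFridge('chicken', fridge));        // true
console.log(matchesFridge('chicken thighs', fridge)); // false
console.log(matchesFridge('butter', fridge));         // true (a false positive)
```

The last call shows the trade-off: looser matching fixes the "chicken" case but starts to confuse unrelated items, which may be why strict equality was kept.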

llamaService.js — extract trailing JSON

    const m = result.match(
      /STRUCTURED_DATA: ({.*})/s
    );

    let structuredData = null;
    let cleanedResponse = result;

    if (m) {
      try {
        structuredData = JSON.parse(m[1]);
        cleanedResponse = result
          .replace(/STRUCTURED_DATA: ({.*})/s, '')
          .trim();
      } catch (e) {
        // prose still renders;
        // structured UI is skipped this turn
      }
    }

Trailing-JSON output protocol

The system prompt tells the model to end every reply with a line of the form STRUCTURED_DATA: {...}. The service extracts that tail with a regex that uses the s (dotAll) flag, so the JSON can span multiple lines, then parses it and strips it from the prose.

Four response shapes drive the UI:

- options: pill row for picking a dish

- recipe: ingredient list, steps, nutrition, timing

- custom_step: one step of a six-stage guided flow (nutrition → base → protein → vegetables → seasonings → method)

- general: free-form answer, no structured UI

If the tail JSON is malformed, the try/catch drops the structured data and the prose still renders.
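
Once parsed, the object has to be routed to one of the four shapes. A sketch of that step, assuming the payload carries a `type` field naming the shape; the field name and the `resolveShape` helper are assumptions, since the payload schema is not shown here.

```javascript
const KNOWN_SHAPES = new Set(['options', 'recipe', 'custom_step', 'general']);

// Hypothetical helper: decide which UI shape to render for one reply.
// Anything missing or unrecognized degrades to 'general' (prose only).
function resolveShape(structuredData) {
  if (structuredData === null || typeof structuredData !== 'object') {
    return 'general';
  }
  return KNOWN_SHAPES.has(structuredData.type) ? structuredData.type : 'general';
}

console.log(resolveShape({ type: 'recipe', name: 'Shakshuka' })); // 'recipe'
console.log(resolveShape({ type: 'poll' }));                      // 'general'
console.log(resolveShape(null));                                  // 'general'
```

Defaulting to `general` keeps the same failure mode as the malformed-JSON case: the prose renders and the structured UI is skipped for that turn.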

Tech Stack

| Layer | Technology | Role |
|---|---|---|
| Frontend | React 19 | UI framework |
| Frontend | Styled Components | CSS-in-JS |
| Frontend | Framer Motion | Animations and sheet transitions |
| Backend | Node.js + Express | API server |
| Backend | Mongoose | MongoDB ODM |
| Backend | express-validator | Input validation |
| Backend | Winston | Structured logs |
| AI | Together.ai | LLM provider |
| AI | Llama 3.3 70B Instruct Turbo | Language model |
| Database | MongoDB | User profile, fridge, recipes, shopping list |
| Auth | JWT + bcrypt | Authentication |